"ChatGPT is a computer program that can chat with you like a human and understand the meaning behind your words. You can interact with ChatGPT by typing in questions or statements, and it will respond with its best understanding of what you're saying. It's important to note that while ChatGPT is highly advanced, it's still a machine, and its responses may not always be perfect." Whilst ChatGPT is very efficient at describing itself clearly and honestly, it is important to have a real life expert explain the pros and cons of this game-changing AI technology.

With a Master’s degree in Big Data and Business Analytics, Visiting Lecturer Nicolas Maréchal teaches the fundamentals of Computational Thinking and Python Programming at EHL. Today, he guides us through the future AI landscape.

1. What is ChatGPT and where does it originate from?

ChatGPT (Chat Generative Pre-Trained Transformer) is an AI language model (also referred to as an AI-powered chatbot and coding assistant) launched by OpenAI in November 2022. Within two months it had reached an estimated 100 million monthly active users, which would make it the fastest-growing internet service ever. To put this in perspective, WhatsApp needed three and a half years and TikTok nine months to reach the same milestone.

ChatGPT is a state-of-the-art Natural Language Processing (NLP) model based on the Transformer architecture (introduced in the 2017 paper "Attention Is All You Need") and designed to generate human-like text responses to questions and prompts. It is built on top of GPT-3 (Generative Pre-Trained Transformer 3), and more precisely on top of “Davinci”, the most capable model in the GPT-3 family, which OpenAI introduced in May 2020.

ChatGPT, however, is not the first of its kind. BERT (Bidirectional Encoder Representations from Transformers), developed by Google in 2018, was the first highly successful transformer-based language model.

Amongst others, ChatGPT can be used for natural language processing (NLP) oriented tasks such as text generation, language translation and text summarization. It can also be used for specific applications such as question answering, sentiment analysis and text classification. Overall, ChatGPT is a powerful tool for any application that involves generating or understanding human language.

OpenAI’s recent $10 billion deal with Microsoft and Google’s new chatbot ‘Bard’ (powered by LaMDA) open a new chapter in the tech giants’ AI search war, and more breakthroughs are expected to emerge quickly in this field. We have an exciting time ahead of us!

2. How does ChatGPT work?

ChatGPT works by training a very large model (GPT-3 has 175 billion parameters) on large amounts of text gathered from the internet and other sources. During training, the model learns patterns and relationships between words and phrases in the language, and it then uses this knowledge to generate human-like responses to a given input. When a user provides input to ChatGPT, the model processes it and generates a response based on the most likely sequence of words, given the input and its training.
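To make "the most likely sequence of words" concrete, here is a minimal sketch of next-word prediction using simple word-pair counts. It is a toy stand-in, not how ChatGPT is implemented: the corpus, the function names and the greedy decoding strategy are all assumptions chosen for illustration, and a real model uses a neural network over a far larger vocabulary and context.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus; a real model trains on vastly more text.
CORPUS = (
    "the model reads text . "
    "the model predicts the next word . "
    "the model predicts the next token ."
).split()

def train_bigrams(tokens):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def next_word_probs(counts, prev):
    """Turn raw follow-up counts into a probability distribution."""
    total = sum(counts[prev].values())
    return {word: n / total for word, n in counts[prev].items()}

def generate(counts, start, max_words=5):
    """Greedy decoding: always append the most likely next word."""
    words = [start]
    for _ in range(max_words):
        followers = counts.get(words[-1])
        if not followers:
            break
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)

counts = train_bigrams(CORPUS)
probs = next_word_probs(counts, "model")
print(probs)
print(generate(counts, "model", max_words=1))
```

In this toy corpus, "predicts" follows "model" twice and "reads" once, so the sketch assigns "predicts" a probability of two thirds and picks it as the next word.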

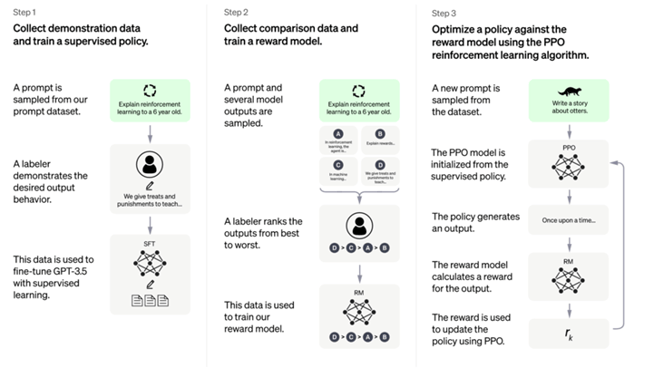

One major difference between GPT-3 and ChatGPT is the use of reinforcement learning from human feedback (RLHF), a process that can be divided into three parts: 1) supervised fine-tuning (producing the SFT model), 2) training a reward model (RM), and 3) fine-tuning the SFT model with reinforcement learning (technical details in the appendix).

Reinforcement learning (RL) is very useful here because it steers a model's predictions toward human preferences, optimizing the weights against metrics that reflect human judgment better than generic loss functions do. RL also makes it possible to use metrics that would otherwise be unusable because they are not differentiable, such as human preferences expressed as reward functions.

ChatGPT, like other language models, also uses the principle of "memorization" to generate text. This means that the model has seen a vast amount of text data during its training process and has memorized the patterns and relationships between words and phrases in that data. When the model generates text, it uses this memorized information about the language to predict the next word (or token), or sequence of words, based on the specific input provided. The model can then generate a wide variety of outputs (such as one-word answers or complex paragraphs) based on the information it has stored in its memory.

It's essential to note that the model doesn't understand the meaning of the text in the same way a human would. Instead, it relies on statistical associations between words and phrases to generate text. As an illustration, consider the sentence: "The Roman Empire [MASK] with the reign of Augustus."

A probabilistic model might predict "began" or "ended", as both words are highly likely in this position even though they have opposite meanings. Hence, predicting the next word (or a masked word) in a text sequence does not necessarily mean learning a higher-level representation of its meaning.

3. How to use ChatGPT and what value does it provide?

The value of ChatGPT to the public resides in its ability to generate human-like text based on the user’s input, making it very useful for a variety of applications and use cases. Some examples, leveraging ChatGPT’s API or its interface, include:

- Software development: ChatGPT can be used to turn text into code and vice versa. It can also serve as a search tool for programming questions, much like Stack Overflow.

- Conversational AI: ChatGPT can be used to build conversational AI systems for customer service or personal assistants, allowing for more natural and human-like interactions between users and machines.

- Content generation: ChatGPT can be used to generate creative writing, advertisements, personalized emails, news articles and other types of text, which can save time and resources for marketing departments or freelancers, compared to manual writing. The market for AI-assisted content creation is already quite mature (e.g., jasper.ai, craftly.ai, sembly.ai, poised.com, donotpay.com).

- Text summarization: ChatGPT can be used to generate a summary of a longer text, making it extremely useful for summarizing academic research, news articles, scientific papers, etc.

- Language modeling: Although a model like BERT would be better suited to fine-tuning on specific tasks, ChatGPT can use zero-shot learning (predicting the answer given only a natural-language description of the task) and few-shot learning (where, in addition to the task description, the model sees a few examples of the task) to perform a task it was not explicitly trained for. Examples of applications include sentiment analysis (deciding whether the sentiment is positive, neutral, or negative), question answering, etc.
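The difference between zero-shot and few-shot learning is easiest to see in the prompt itself. The sketch below only builds the prompt text for a sentiment-analysis task and does not call any API. The helper name `build_prompt`, the "Review:" / "Sentiment:" layout and the sample reviews are assumptions made for illustration, not a format prescribed by OpenAI.

```python
def build_prompt(task, query, examples=()):
    """Assemble a zero-shot prompt (no examples) or a few-shot prompt."""
    lines = [task, ""]
    for text, label in examples:
        # Each worked example shows the model the expected input/output shape.
        lines += [f"Review: {text}", f"Sentiment: {label}", ""]
    # The final item is left open for the model to complete.
    lines += [f"Review: {query}", "Sentiment:"]
    return "\n".join(lines)

TASK = "Classify the sentiment of each review as positive, neutral, or negative."
QUERY = "The room was spotless and the staff were kind."

# Zero-shot: task description only.
zero_shot = build_prompt(TASK, QUERY)

# Few-shot: task description plus a few hypothetical labeled examples.
few_shot = build_prompt(
    TASK,
    QUERY,
    examples=[
        ("The food arrived cold.", "negative"),
        ("Check-in took about ten minutes.", "neutral"),
    ],
)
print(few_shot)
```

Either string would then be sent to the model as input. In the few-shot case, the examples show the model the expected answer format without any retraining.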

4. ChatGPT and education

ChatGPT and other advanced language models have proven to have a significant impact on education, providing new and innovative ways to personalize learning, generate content and support students as they learn.

- Personalized learning: ChatGPT can be used to generate personalized learning materials and assessments, adapting to the needs and abilities of individual students.

- Automated grading: ChatGPT can be used to grade written assignments, freeing up time for teachers to focus on other tasks and allowing for faster, more efficient grading.

- Conversational tutoring: ChatGPT can be used to build conversational tutoring systems, providing students with immediate feedback and support as they learn.

- Textbook generation: ChatGPT could generate textbooks and other educational content, making it possible to quickly create high-quality materials for a wide range of subjects.

But there are also some potentially negative impacts to consider:

- Over-reliance on technology: ChatGPT and other AI systems can become a 'crutch', potentially reducing the need for critical thinking and creativity in the classroom.

- Bias and fairness: As with any AI system, the training data used to develop ChatGPT can contain biases that are reflected in the model's output. This can have a negative impact on education by perpetuating stereotypes and inaccuracies.

- Lack of human interaction: ChatGPT and other language models lack the empathy and emotional intelligence of human teachers and tutors, potentially reducing the quality of the educational experience for students.

- Fact-checking errors: ChatGPT can make fundamental mistakes, and its answers often contain inaccuracies. Students (and the public in general) should not rely solely on ChatGPT for any important task.

While politicians are raising concerns about public education systems lacking technological maturity (in general, not just with AI), some argue that “The College Essay Is Dead”, which illustrates how large the impact of ChatGPT on education is and why it cannot be ignored.

5. Where is this new technology heading?

Over the past few years, Generative Pre-Trained Transformers (GPT) have advanced rapidly, and many more breakthroughs are expected in this field. Some potential directions for the technology's development include:

- Increased sophistication: GPT models are likely to become even more advanced and capable of leveraging more parameters, probably incorporating new techniques and technologies to improve their ability to generate human-like text.

- More applications: The technology is expected to continue expanding into new domains, with potential applications in areas such as voice-based interaction, video generation and others.

- Integration with other technologies: GPT models are expected to be integrated with other advanced technologies, such as computer vision (image analysis) and robotics. They are currently being built into Microsoft’s Office software and its Bing search engine.

- Ethical considerations: As GPT’s impact on society increases, ethical considerations are likely to become more prominent, with a focus on ensuring that the technology is used in ways that are responsible, fair and equitable.

Note: At the time of writing, GPT-4 has not been officially announced or released by OpenAI. The name refers to a potential future iteration of the GPT language model series (currently at version 3), which is likely to have many more parameters and to be more accurate and robust.

6. AI text detector

GPTZero (built by Edward Tian at Princeton University) is a recently developed tool designed to detect AI-generated text. It uses machine learning algorithms to analyze text and determine if it was written by a human or generated by a language model such as ChatGPT. OpenAI themselves also launched a similar classifier trained to identify AI plagiarism.

Amongst other metrics, GPTZero uses perplexity, which measures how well a language model predicts a given sequence of words (formally, the exponential of the negative average per-word log probability, so lower perplexity means the text was easier for the model to predict). Human-written texts tend to have a higher average perplexity and higher burstiness (their perplexity varies more from sentence to sentence). Hence, it is possible to estimate whether a human or a machine wrote specific portions of a text.
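As a rough illustration of these two metrics, the sketch below computes perplexity from per-word probabilities and uses the spread of per-sentence perplexity as a stand-in for burstiness. The probabilities are made-up numbers, and measuring burstiness with a standard deviation is an assumption for this example; GPTZero's actual formula is not described here.

```python
import math
import statistics

def perplexity(token_probs):
    """Exponential of the negative average log probability of the tokens."""
    avg_log_prob = sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(-avg_log_prob)

def burstiness(sentences):
    """Spread of per-sentence perplexity (population standard deviation)."""
    return statistics.pstdev([perplexity(s) for s in sentences])

# Hypothetical per-word probabilities assigned by some language model.
uniform_text = [[0.5, 0.5, 0.5], [0.5, 0.5, 0.5]]  # evenly predictable
varied_text = [[0.5, 0.5], [0.1, 0.1]]             # one surprising sentence

print(perplexity(uniform_text[0]))
print(burstiness(uniform_text), burstiness(varied_text))
```

A text whose sentences are all equally predictable has a burstiness of zero under this definition, while a text that mixes predictable and surprising sentences scores higher.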

Whether or not GPTZero can live up to its promise to detect AI-produced written text depends on a variety of factors, including the accuracy of the underlying machine learning algorithms and the quality of the training data used to develop the model. In general, tools like GPTZero can be effective at detecting AI-generated text in certain cases, but they are not ‘foolproof’ and can sometimes produce false positive or false negative results.

It is important to keep in mind that the field of AI-generated text detection is rapidly evolving, and new and more advanced tools are constantly being developed. The effectiveness of GPTZero and other similar tools may well improve in the future as the technology continues to advance.

Appendix

One major difference between GPT-3 and ChatGPT is the use of reinforcement learning from human feedback (RLHF), whose process can be divided into three parts:

- Supervised fine-tuning (SFT model)

  - Human labelers write down the expected output response for a list of prompts (12-15k data points, presumably).

  - GPT is trained to predict the human response.

- Train reward model (RM)

  - A list of prompts is selected and the SFT model generates multiple outputs for each prompt.

  - Labelers rank the outputs from best to worst. The resulting dataset is approximately 10 times the size of the SFT dataset.

  - Train the Reward Model (RM). It takes the SFT model outputs and ranks them in order of preference.

- Fine-tune the SFT model with reinforcement learning

  - Reinforcement Learning is now applied to fine-tune the SFT policy by letting it optimize the reward model.

  - The specific algorithm used is called Proximal Policy Optimization (PPO).
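To give a feel for the reward-model step, the sketch below shows one common way to turn a human ranking into a training signal: a pairwise ranking loss that is small when the model scores preferred outputs higher than less preferred ones. The text above does not state which loss is used, so this choice, the function name and the example scores are all assumptions.

```python
import math
from itertools import combinations

def pairwise_ranking_loss(rewards_best_to_worst):
    """Average -log(sigmoid(r_better - r_worse)) over all ranked pairs.

    `rewards_best_to_worst` holds the reward model's scores for outputs
    that labelers ordered from best to worst.
    """
    losses = []
    for better, worse in combinations(rewards_best_to_worst, 2):
        margin = better - worse
        # -log(sigmoid(margin)) written as log(1 + exp(-margin))
        losses.append(math.log(1 + math.exp(-margin)))
    return sum(losses) / len(losses)

# Hypothetical scores for three outputs ranked best, middle, worst.
agrees_with_labelers = pairwise_ranking_loss([2.0, 1.0, 0.0])
disagrees_with_labelers = pairwise_ranking_loss([0.0, 1.0, 2.0])
print(agrees_with_labelers, disagrees_with_labelers)
```

Minimizing such a loss pushes the reward model to score outputs in the same order as the human labelers. The reinforcement learning step can then use those scores as its reward.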

Source: https://dineshyadav.com/chatgpt-explained/


EHL Visiting Lecturer